Hi! I am a Ph.D. student in Computer Science at Texas A&M University, advised by Dr. Shuiwang Ji. I also actively collaborate with Dr. Dileep Kalathil and Dr. James Caverlee. My research focuses on post-training of Large Language Models (LLMs) and Diffusion Language Models (DLMs). Through post-training, I aim to improve their reasoning capabilities and accelerate inference via parallel token decoding.

🔥 News

- 2026.06: Our paper, “Data-Efficient Autoregressive-to-Diffusion Language Models via On-Policy Distillation” (OPDLM), is now on arXiv! [paper] [code]

- 2026.05: I received the Silver Reviewer Award for ICML 2026!

- 2026.04: Our paper, “Learnability-Informed Fine-Tuning for Diffusion Language Models” was accepted at ICML 2026!

- 2026.03: I have been awarded the ICLR 2026 Financial Assistance grant to attend the conference in Rio!

- 2026.01: Our paper, “Curriculum Reinforcement Learning from Easy to Hard Tasks Improves LLM Reasoning” was accepted at ICLR 2026!

- 2025.05: I will be interning as an Applied Scientist at Amazon (Santa Clara, CA) this summer.

- 2025.02: Our paper, “Few-Shot Recognition via Stage-Wise Retrieval-Augmented Finetuning” was accepted at CVPR 2025!

- 2024.06: Our paper, “The Neglected Tails in Vision-Language Models” was accepted to DMLR as an oral presentation.

- 2024.06: I have begun my Ph.D. at Texas A&M under the supervision of Dr. Shuiwang Ji.

- 2024.02: Our paper, “The Neglected Tails in Vision-Language Models” has been accepted at CVPR 2024!

- 2023.12: I will be presenting our paper, “Prompting Scientific Names for Zero-Shot Species Recognition” at EMNLP 2023.

- 2022.02: I was awarded the Texas A&M Computer Science departmental scholarship.

📝 Publications

LIFT: Learnability-Informed Fine-Tuning for Diffusion Language Models

Shubham Parashar, Atharv Chagi, Jacob Helwig, Lakshmi Jotsna, Sushil Vemuri, James Caverlee, Dileep Kalathil, Shuiwang Ji

- We show that vanilla SFT for diffusion language models (DLMs) overlooks learnability, that is, which tokens can be learned and when: rare tokens are hard to learn when most of the input is masked, while common tokens are trivial to learn when most of the input is unmasked.

- We propose LIFT, an efficient SFT-based post-training algorithm that learns easy tokens at large $t$ and hard tokens at small $t$, aligning training with the information available at each diffusion time step.

- We show that LIFT performs competitively with RL-based post-training for DLMs while using far less compute.

Curriculum Reinforcement Learning from Easy to Hard Tasks Improves LLM Reasoning

Shubham Parashar, Shurui Gui, Xiner Li, Hongyi Ling, Sushil Vemuri, Blake Olson, Eric Li, Yu Zhang, James Caverlee, Dileep Kalathil, Shuiwang Ji

- We propose E2H Reasoner, a curriculum-based RL approach that schedules tasks from easy to hard using a probabilistic scheduler, enabling small LLMs (1.5B–3B) to solve tasks they initially failed in the zero-shot setting.

- We provide theoretical convergence guarantees showing curriculum stages require fewer samples than direct learning on hard tasks.

Few-Shot Recognition via Stage-Wise Retrieval-Augmented Finetuning

Tian Liu, Huixin Zhang, Shubham Parashar, Shu Kong

- We retrieve open-world data for solving few-shot recognition, and propose stage-wise training to mitigate the imbalanced distribution and domain gap issues.

The Neglected Tails in Vision-Language Models

Shubham Parashar, Zhiqiu Lin, Tian Liu, Xianjue Dong, Yanan Li, Deva Ramanan, James Caverlee, Shu Kong

- Our study is the first to reveal bias in popular VLMs like CLIP due to long-tailed training data.

- To mitigate the bias, we propose a novel prompting method and a retrieval-augmented strategy.

- Both methods achieve new state-of-the-art results, and our retrieval-augmented method uses 100x less compute than previous retrieval-augmented strategies.

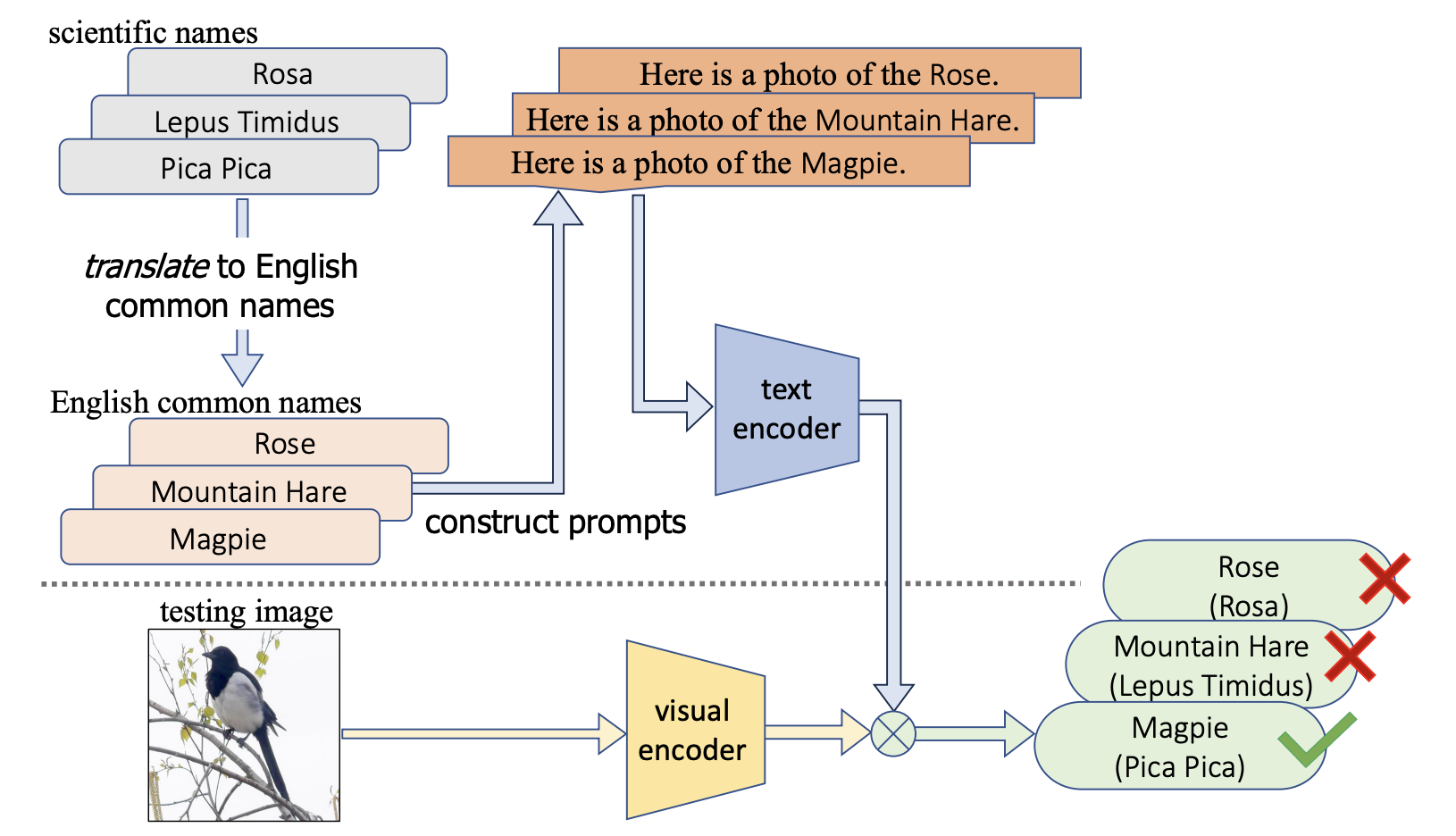

Prompting Scientific Names for Zero-Shot Species Recognition

Shubham Parashar, Zhiqiu Lin, Yanan Li, Shu Kong

- We propose an embarrassingly simple prompting method that boosts the zero-shot accuracy of VLMs on 4 fine-grained species recognition benchmarks by 2-5x.

- Facial identification using Haar Cascading with BRISK, Dhruv Dixit, Shubham Parashar, Aashay Gondalia, Animesh Sengupta, M Sivagami, ic-ETITE 2020

- IoT-based healthcare monitoring system for war soldiers using machine learning, Aashay Gondalia, Dhruv Dixit, Shubham Parashar, Vijayanand Raghava, Animesh Sengupta, Vergin Raja Sarobin, IcROSMA 2018

📝 Preprints

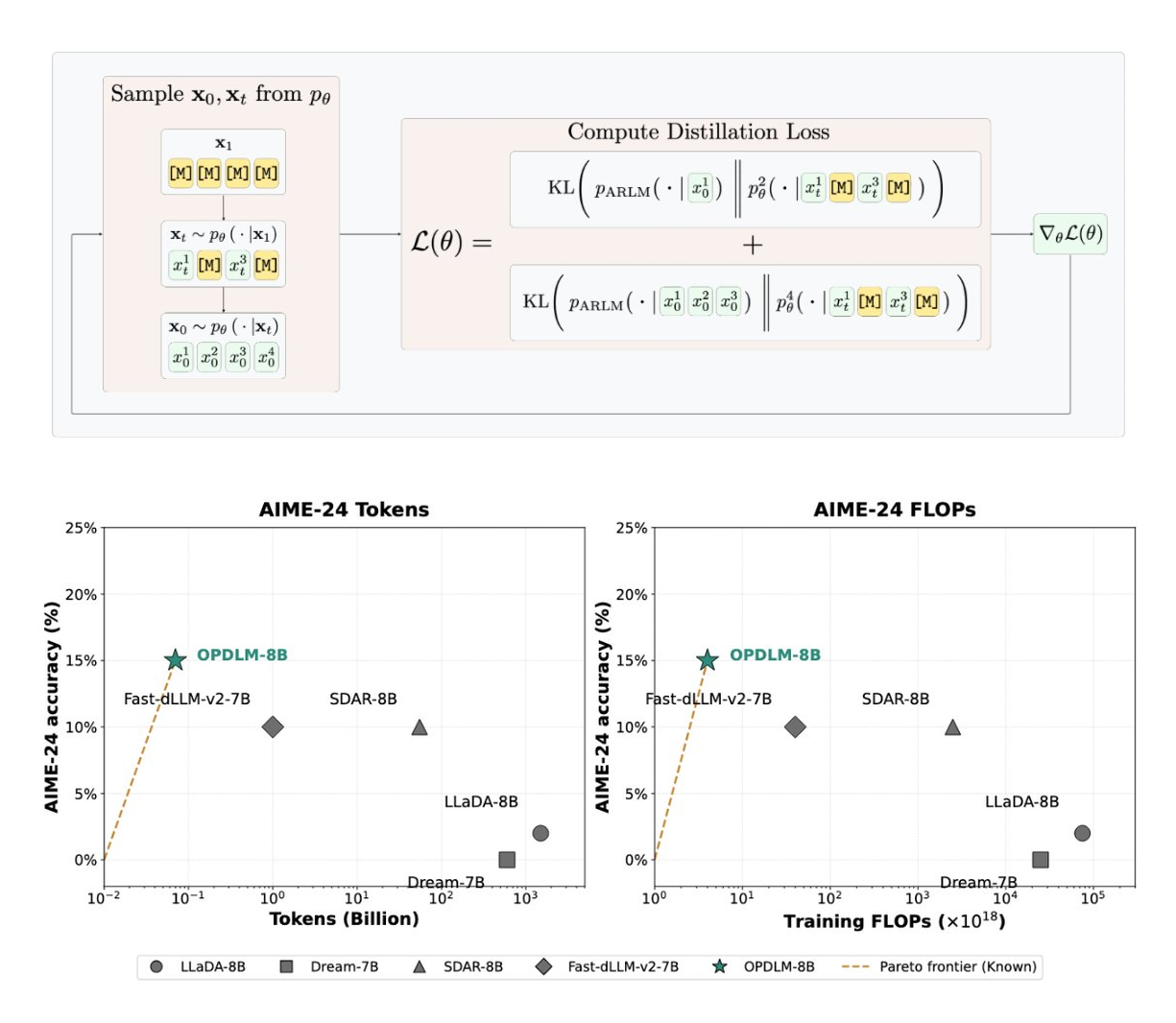

Data-Efficient Autoregressive-to-Diffusion Language Models via On-Policy Distillation

Shubham Parashar*, Xingyu Su*, Jacob Helwig*, Atharv Chagi, Lakshmi Jotsna, Degui Zhi, James Caverlee, Dileep Kalathil, Shuiwang Ji

*Equal contribution

- We frame autoregressive-to-diffusion conversion as post-training: a student diffusion LM is initialized from a base ARLM and trained on its own on-policy trajectories, with the frozen ARLM acting as its own teacher via a KL objective.

- We use On-Policy Self-Distillation to close the train-inference gap in DLM training, reaching competitive performance with 1-3 orders of magnitude less training compute and no DLM pretraining.

- We release models from 0.6B to 8B, along with code and training data.

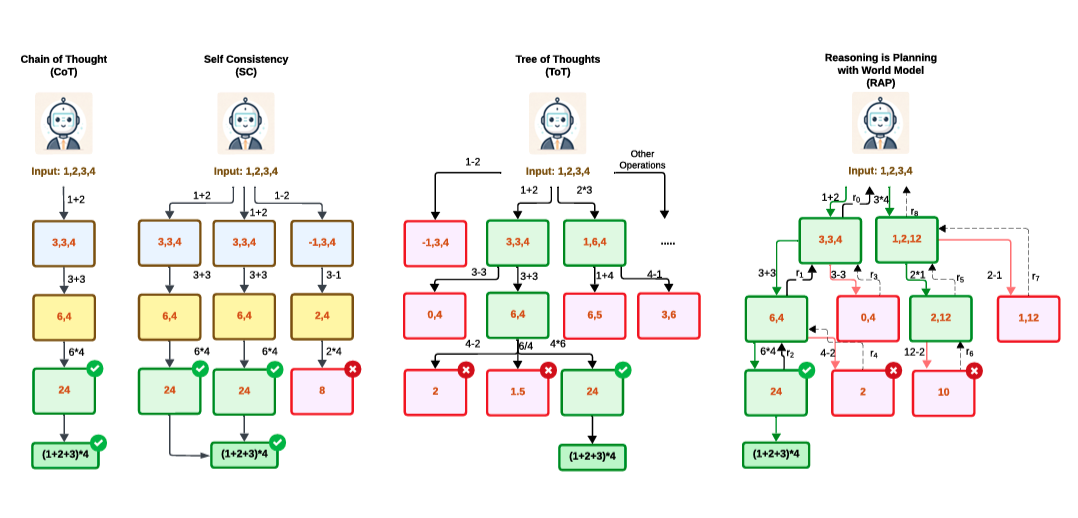

Inference-Time Computations for LLM Reasoning and Planning: A Benchmark and Insights

Shubham Parashar, Blake Olson, Sambhav Khurana, Eric Li, Hongyi Ling, James Caverlee, Shuiwang Ji

- We construct Sys2Bench, a comprehensive benchmark evaluating inference-time techniques across 11 diverse tasks spanning arithmetic, logical, common sense, algorithmic reasoning, and planning.

- We reveal that simply scaling inference-time compute does not consistently improve performance, highlighting fundamental limitations of existing methods.

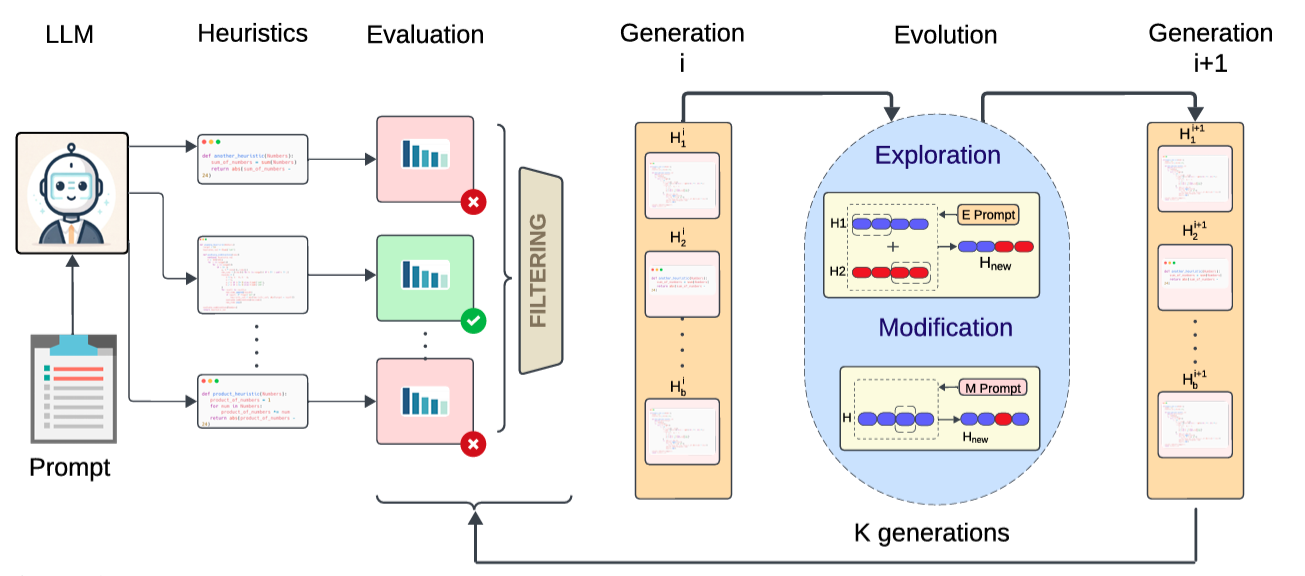

Complex LLM Planning via Automated Heuristics Discovery

Shubham Parashar, Hongyi Ling, Sambhav Khurana, Blake Olson, Anwesha Basu, Gaurangi Sinha, Zhengzhong Tu, James Caverlee, Shuiwang Ji

- We propose AutoHD, which prompts LLMs to generate explicit heuristic functions as Python code to guide inference-time search, removing reliance on unreliable self-verification.

- AutoHD refines the heuristic functions through an evolutionary process and then uses them for inference-time search.

🎖 Honors and Awards

- 2026: Silver Reviewer Award - International Conference on Machine Learning (ICML) (awarded to the top 25% of ~18,000 reviewers)

- 2026: Financial Assistance Award — International Conference on Learning Representations (ICLR) (awarded to ~325 of ~15,000 authors, ~2.2%)

- 2024: Best Reviewer Award — Annual Conference on Neural Information Processing Systems (NeurIPS)

- 2024: Travel Award — Conference on Computer Vision and Pattern Recognition (CVPR)

- 2023: Travel Grant — CSE@TAMU

- 2023: Department Scholarship — CSE@TAMU

📚 Teaching & Service

Teaching

- 2024: Teaching Assistant — CSCE 421: Machine Learning (Texas A&M)

- Spring 2024: Grader — CSCE 636: Deep Learning (Texas A&M)

- Spring 2023: Grader — CSCE 642: Deep Reinforcement Learning (Texas A&M)

Professional Service

- 2026: Volunteer - International Conference on Machine Learning (ICML)

- 2026: Reviewer — ACM Computing Surveys (CSUR)

- 2026: Reviewer — Transactions on Pattern Analysis and Machine Intelligence (PAMI)

- 2025: Reviewer — Transactions on Machine Learning Research (TMLR)

- 2025, 2026: Reviewer — International Conference on Machine Learning (ICML)

- 2025, 2026: Reviewer — International Conference on Learning Representations (ICLR)

- 2024, 2025: Reviewer — Annual Conference on Neural Information Processing Systems (NeurIPS)

- 2024: Reviewer — Data-centric Machine Learning Research Workshop @ ICML

- 2024: Organizer — Visual Perception via Learning in an Open World Workshop @ CVPR

- 2024: Reviewer — What is Next in Multimodal Foundation Models Workshop @ CVPR

Mentoring

- 2025–Present: Lakshmi Jotsna Madhavarapu — Incoming Data Scientist Intern @ Capital One

- 2025–Present: Atharv Chagi — Incoming Software Engineer Intern @ Texas Instruments

- 2024–2025: Blake Olson — MS CS @ UT Austin

- 2024–2025: Eric Li — Junior @ Texas A&M University

📖 Education

- 2024.06 - Present, Ph.D. in Computer Science, Texas A&M University, College Station, Texas

- 2022.08 - 2024.05, M.S. in Computer Science, Texas A&M University, College Station, Texas

- 2015.07 - 2019.05, Undergraduate, Vellore Institute of Technology University, Chennai, India